Fallacies Under Pressure: Identifying, Countering, and Preventing Faulty Reasoning in Everyday Life and Intelligence Analysis

A practitioner-scholar guide to recognition, defense, mitigation, and micro-protocols for disciplined judgment

Abstract

Critical thinking is not merely the critique of others’ arguments; it is the disciplined examination of one’s own reasoning under conditions of uncertainty, time pressure, and social influence. This article introduces fallacies as recurring defects in reasoning that can mislead both everyday judgment and high-stakes professional analysis. It begins with the basic problem of identification, then explains major categories of fallacies, shows how they differ from cognitive biases, and outlines practical methods for defending against them. The discussion is designed for both academics and practitioners, with particular attention to intelligence analysis, where the costs of poor reasoning may include distorted assessments, premature closure, false confidence, and policy error. The article argues that fallacy recognition is most effective when paired with explicit assumptions checks, alternative analysis, calibrated confidence language, and repeatable micro-protocols that can be used in meetings, briefings, classrooms, investigations, and daily decisions. The goal is not merely to label bad arguments, but to strengthen judgment by making evidence, assumptions, uncertainty, and alternatives visible. (Facione, 1990; Hansen, 2024; Heuer, 1999; Office of the Director of National Intelligence [ODNI], 2015).

Keywords

critical thinking; logical fallacies; informal fallacies; intelligence analysis; analytic tradecraft; bias mitigation; structured analytic techniques; micro-protocols

Introduction

Critical thinking is best understood as purposeful, self-regulatory judgment. In the classic Delphi consensus, it includes interpretation, analysis, evaluation, inference, explanation, and self-regulation, and it is tied not only to skill but also to habits such as curiosity, fairness, prudence, and a willingness to reconsider. Fallacy study belongs inside that broader project because fallacies are among the most common ways reasoning goes wrong while still sounding persuasive. A person may speak confidently, cite sources, or appeal to shared values and still offer an argument that is weak, misleading, or structurally defective. To learn fallacies well, then, is not to memorize names for classroom quizzes; it is to become more reliable in judgment. (Facione, 1990; Hansen, 2024; Tindale, 2007).

The need becomes sharper in intelligence and other high-consequence professions. Analysts work with incomplete and ambiguous information, and formal standards for analytic products require rigor, objectivity, explicit uncertainty, the separation of assumptions from judgments, and the consideration of plausible alternatives. In such settings, fallacies are not merely rhetorical errors; they become tradecraft failures when a conclusion outruns the evidence, when assumptions are smuggled in as facts, or when attractive narratives crowd out competing explanations. Good reasoning under pressure must therefore be disciplined, not improvised. (Heuer, 1999; ODNI, 2015).

This article proceeds in a practical sequence. It defines fallacies, distinguishes them from biases, explains core categories, offers diagnostic questions for identification, and then moves to defense, mitigation, and micro-protocols. Throughout, the emphasis is on application: how to think better in conversations, classrooms, research, investigations, and intelligence products.

What Counts as a Fallacy?

A fallacy is not just any disagreement, unpopular opinion, or weak conclusion. In argument theory, fallacies are recurring mistakes in reasoning that either appear stronger than they are or violate the standards of good argument. They often gain traction because they resemble real reasoning closely enough to pass unnoticed. That is why learning fallacies matters: they are not random errors but patterned ones. (Hansen, 2024; Tindale, 2007).

It is also important to distinguish fallacies from cognitive biases. A fallacy is primarily a defect in an argument. A cognitive bias is a predictable tendency in human judgment. The two overlap constantly. Confirmation bias, for example, can make a person more vulnerable to cherry-picking, false cause reasoning, and one-sided interpretation. But the distinction remains useful: one concerns the quality of the reasoning presented; the other concerns the mental tendencies shaping it. In practice, disciplined thinkers look for both. (Hansen, 2024; Heuer, 1999).

A practical way to analyze any argument is to separate three elements: the claim, the evidence, and the warrant that connects the evidence to the claim. In intelligence tradecraft, this corresponds closely to the requirement to distinguish underlying information from assumptions and judgments. When analysts fail to make those distinctions explicit, fallacies hide in the seams. A conclusion starts to look “obvious” not because it is well supported, but because the unstated assumptions were never exposed to scrutiny. (ODNI, 2015; Tindale, 2007; Facione, 1990).
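The three-part split described above can be made concrete as a small record. The following is a minimal sketch in Python, not a tradecraft standard: the `Argument` class, the `open_questions` helper, and the sample claim are all hypothetical names and data introduced here for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Argument:
    """Hypothetical record separating claim, evidence, and warrant."""
    claim: str
    evidence: list[str] = field(default_factory=list)
    warrant: str = ""  # the assumption that links the evidence to the claim


def open_questions(arg: Argument) -> list[str]:
    """Return prompts for any element that has not been made explicit."""
    questions = []
    if not arg.evidence:
        questions.append("No evidence is recorded for the claim.")
    if not arg.warrant.strip():
        questions.append("The assumption linking evidence to claim is unstated.")
    return questions


# Illustrative (invented) example: evidence is given, but the warrant is missing.
example = Argument(
    claim="The shipment delay was deliberate.",
    evidence=["The delay followed the policy announcement."],
)
for question in open_questions(example):
    print(question)
```

The point of the sketch is only that an empty warrant field is visible on the page, whereas an unstated assumption in prose is easy to miss.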

Core Categories of Fallacies

The broadest initial distinction is between formal and informal fallacies. Formal fallacies are errors in logical form, such as affirming the consequent or denying the antecedent. Their defect lies in structure: even if the premises were true, the conclusion would not follow validly. Informal fallacies are more common in natural language and professional discourse. Their defect lies in relevance, sufficiency, ambiguity, presumption, or misuse of evidence. In real life, people encounter informal fallacies far more often than textbook syllogistic errors. (Hansen, 2024).
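Because formal fallacies are defects of structure, they can be checked mechanically. The sketch below, written in Python as an illustration only, enumerates every truth assignment for two propositions P and Q and looks for a case where all premises are true and the conclusion is false. The helper names `implies` and `is_valid` are assumptions introduced here, not part of any cited source.

```python
from itertools import product


def implies(p: bool, q: bool) -> bool:
    """Material conditional: false only when p is true and q is false."""
    return (not p) or q


def is_valid(premises, conclusion) -> bool:
    """A form is valid if no assignment makes every premise true
    while the conclusion is false."""
    for p, q in product([True, False], repeat=2):
        if all(premise(p, q) for premise in premises) and not conclusion(p, q):
            return False  # counterexample found
    return True


# Modus ponens: if P then Q; P; therefore Q.
modus_ponens = is_valid(
    [lambda p, q: implies(p, q), lambda p, q: p],
    lambda p, q: q,
)

# Affirming the consequent: if P then Q; Q; therefore P.
affirming_consequent = is_valid(
    [lambda p, q: implies(p, q), lambda p, q: q],
    lambda p, q: p,
)

# Denying the antecedent: if P then Q; not P; therefore not Q.
denying_antecedent = is_valid(
    [lambda p, q: implies(p, q), lambda p, q: not p],
    lambda p, q: not q,
)

print(modus_ponens, affirming_consequent, denying_antecedent)
# prints: True False False
```

Both fallacious forms fail on the same assignment (P false, Q true): the premises hold, yet the conclusion does not. Informal fallacies do not reduce to a table like this, which is one reason they are harder to catch.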

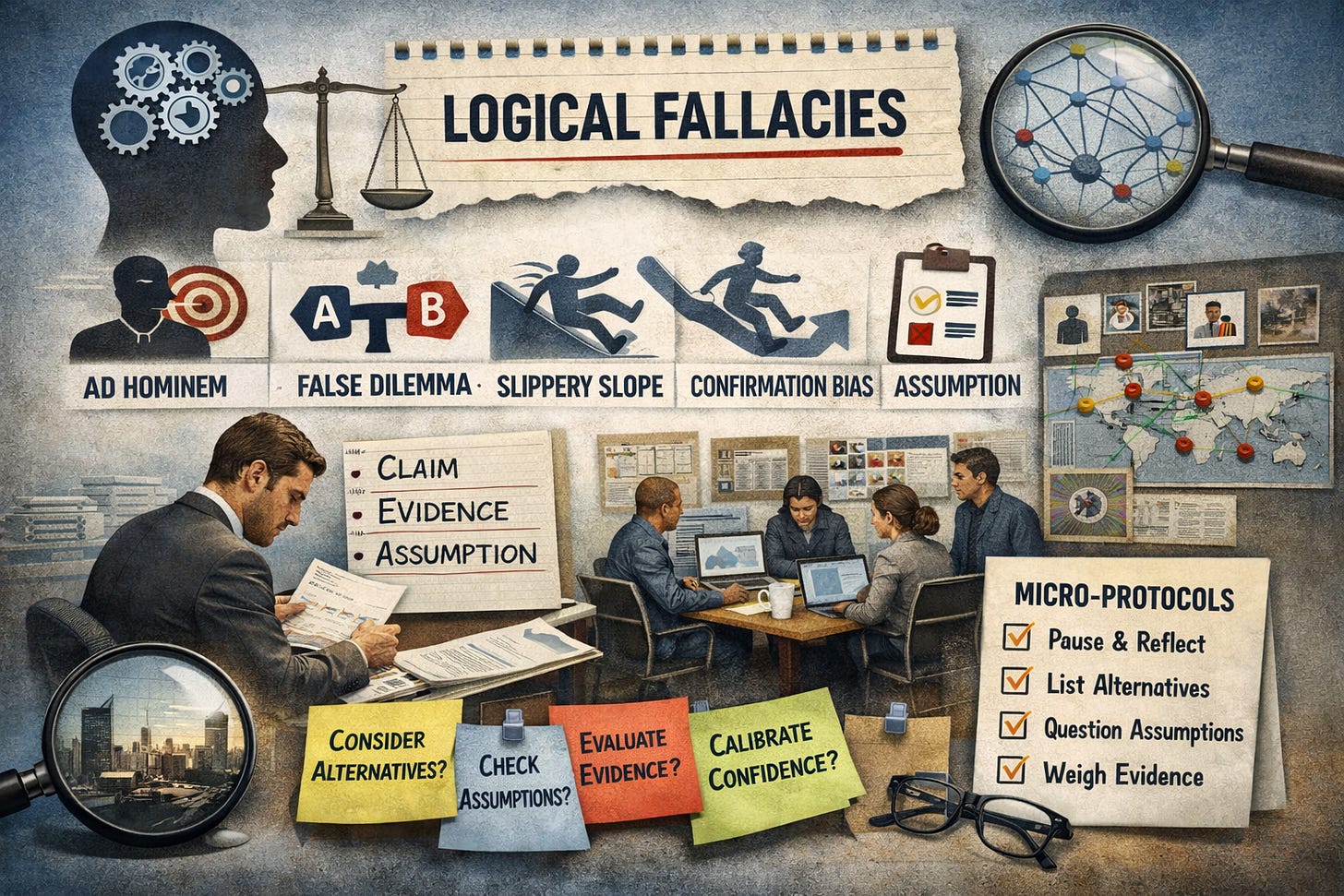

One useful working taxonomy groups informal fallacies into four large families. The first is relevance fallacies, in which the premises are psychologically powerful but logically off target. Ad hominem attacks, appeals to emotion, red herrings, and some appeals to authority belong here. The second is presumption fallacies, where the argument assumes what must still be proven. False dilemmas, begging the question, loaded questions, and suppressed alternatives are familiar examples. The third is ambiguity fallacies, where vagueness, equivocation, or shifting meanings create the illusion of support. The fourth is weak induction and causal fallacies, where the evidence is too thin, too selective, or too poorly connected to warrant the conclusion. Hasty generalization, false cause, post hoc reasoning, weak analogy, and slippery slope arguments often operate in this space. (Hansen, 2024; Tindale, 2007).

For practitioners, the important point is not taxonomic perfection but functional recognition. When a speaker attacks a source instead of the evidence, narrows a field of possibilities too early, shifts the meaning of a key term, or treats sequence as causation, reasoning quality has been compromised. Naming the fallacy can be useful, but diagnosis matters more than vocabulary. The serious question is always: What, exactly, has gone wrong in the movement from premises to conclusion? (Tindale, 2007).

How to Identify a Fallacy

Fallacy recognition starts with disciplined questioning. The following diagnostic questions are simple, but they are powerful precisely because they force reasoning into the open:

What is the exact claim being made?

What evidence is being offered for it?

What assumption connects that evidence to the conclusion?

Are key alternatives being ignored or caricatured?

Is the language precise, or does it shift in meaning?

What would count as disconfirming evidence?

These questions map closely onto core critical-thinking skills and onto analytic tradecraft that requires explicit assumptions, alternatives, and uncertainty. (Facione, 1990; ODNI, 2015).

There are also common warning signs. Be cautious when you hear phrases such as “everyone knows,” “it’s obvious,” “there are only two options,” “after that happened, this happened,” or “you can’t trust that person.” Such cues do not prove a fallacy by themselves, but they often signal that reasoning is being compressed, dramatized, or smuggled past scrutiny. A wise analyst treats these as prompts to slow down, not as verdicts. (Hansen, 2024; Tindale, 2007).

One further caution matters. Not every bad argument fits neatly into a named fallacy, and naming a fallacy is not the same as refuting an argument. Good analysis does not stop at “that’s a straw man” or “that’s post hoc.” It explains why the reasoning fails, what a better standard would be, and what stronger evidence would be required. This moves the discussion from scorekeeping to disciplined inquiry.

Defense Against Fallacies in Daily Life

Defending against fallacies begins with defending against one’s own momentum. Human working memory is limited; people cannot keep all the relevant pros, cons, hypotheses, and caveats in mind at once. Heuer’s work on intelligence analysis emphasizes decomposition and externalization for exactly this reason: getting the problem onto paper, into a matrix, or into a visible structure helps prevent premature closure and one-track interpretation. In daily life, this can be as simple as writing down the claim, the evidence, and the strongest alternative before deciding. (Heuer, 1999).

A second defense is deliberate disconfirmation. The natural impulse is to search for support for the view one already favors. Yet sound reasoning improves when one actively asks, “What would make this claim weaker?” or “What evidence would overturn my preferred interpretation?” Intelligence tradecraft explicitly recommends assumptions checks, alternative analysis, and techniques that keep analysts open to unlikely but plausible explanations. The same discipline works in ordinary conversations, research design, hiring, leadership, and policy debate. (Central Intelligence Agency [CIA], 2009; Heuer, 1999; ODNI, 2015).

A third defense is intellectual humility. The Dunning-Kruger literature remains a useful reminder that people who lack competence in a domain may also lack the metacognitive ability to recognize that deficiency. This matters for fallacies because overconfidence often protects weak arguments from revision. The antidote is not self-doubt for its own sake, but calibration: matching confidence to evidence, complexity, and uncertainty. A strong thinker is not the loudest one in the room, but the one whose certainty moves when the evidence moves. (Kruger & Dunning, 1999; Facione, 1990).

Mitigation in Professional and Intelligence Settings

In professional analysis, fallacy mitigation should not depend on individual brilliance alone. It should be built into process. ODNI analytic standards require analysts to describe source quality, explain uncertainty, distinguish information from assumptions and judgments, assess plausible alternatives, and use clear logical argumentation. Those are not bureaucratic niceties. They are safeguards against the most common reasoning failures: evidentiary inflation, disguised assumption, false certainty, and narrative monopoly. (ODNI, 2015).

Structured analytic techniques are helpful because they force visibility. CIA’s tradecraft guidance recommends brainstorming, key assumptions checks, devil’s advocacy, red teaming, deception detection, analysis of competing hypotheses, and alternative futures work. These tools are valuable not because they guarantee truth, but because they interrupt default cognition. They help analysts challenge assumptions, consider multiple hypotheses, and avoid taking the current analytic line for granted. (CIA, 2009).
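To show what “forcing visibility” can look like, here is a deliberately simplified sketch of an evidence-by-hypothesis matrix in the spirit of analysis of competing hypotheses. It is an illustration under stated assumptions, not the full procedure from the tradecraft literature: the evidence items, hypothesis labels, ratings, and function names are all hypothetical, and the tally only counts inconsistent ratings without weighing source reliability or diagnosticity.

```python
# Hypothetical ratings: "C" = consistent, "I" = inconsistent, "N" = not applicable.
matrix = {
    "E1: Activity increased after the announcement": {"H1": "C", "H2": "C", "H3": "I"},
    "E2: Source reports no change in intent": {"H1": "I", "H2": "C", "H3": "C"},
    "E3: Similar activity occurred last year": {"H1": "I", "H2": "C", "H3": "N"},
}


def inconsistency_counts(matrix: dict) -> dict:
    """Count how many evidence items are rated inconsistent with each hypothesis."""
    counts = {}
    for ratings in matrix.values():
        for hypothesis, rating in ratings.items():
            counts.setdefault(hypothesis, 0)
            if rating == "I":
                counts[hypothesis] += 1
    return counts


def rank_hypotheses(matrix: dict) -> list:
    """Order hypotheses from least to most inconsistent evidence."""
    counts = inconsistency_counts(matrix)
    return sorted(counts.items(), key=lambda item: item[1])


ranking = rank_hypotheses(matrix)
for hypothesis, count in ranking:
    print(f"{hypothesis}: {count} inconsistent item(s)")
# prints:
# H2: 0 inconsistent item(s)
# H3: 1 inconsistent item(s)
# H1: 2 inconsistent item(s)
```

The design choice worth noting is that the tally counts evidence against each hypothesis rather than evidence for it. Evidence that is consistent with several hypotheses, such as E1 in this invented example, does little to separate them, and a low count is a prompt for further scrutiny, not a verdict.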

Still, methods are only scaffolding. Heuer is explicit that no analytic procedure guarantees correctness; judgment remains fallible because it is applied to incomplete and ambiguous information. The lesson is sobering but healthy: techniques reduce exposure to error, yet they do not eliminate the need for disciplined thought, reflective review, and honest uncertainty. In practice, this means teams should evaluate reasoning quality, not just final conclusions, and leaders should protect contestation rather than mistake consensus for rigor. (Heuer, 1999; ODNI, 2015).

In intelligence analysis especially, four fallacy traps recur. First, appeal to authority emerges when senior opinion substitutes for evidence. Second, false dilemma appears when complex geopolitical possibilities are compressed into binary choices. Third, post hoc reasoning treats sequence as cause. Fourth, ad hominem dismissal leads analysts to disregard a source or claim solely because of political alignment or institutional dislike. None of these errors is exotic. All are common under pressure. What makes them dangerous is that they often arrive dressed as efficiency, realism, or decisive leadership.

Micro-Protocols for Daily Life and Professional Practice

The most practical way to operationalize fallacy resistance is through small, repeatable routines. Structured tradecraft already points in this direction by emphasizing assumptions checks, alternatives, explicit uncertainty, and visible reasoning. What follows is a micro-protocol library designed for daily life, classrooms, investigations, leadership meetings, and intelligence work. (CIA, 2009; Heuer, 1999; ODNI, 2015).

The 30-Second Pause

Before agreeing, forwarding, briefing, or deciding, pause and ask: What am I treating as settled too quickly? This interrupts emotional velocity.

Claim-Evidence-Assumption Split

Write three lines: What is the claim? What is the evidence? What assumption connects them? If the assumption is doing all the work, the argument is weak.

Two Disconfirmers Rule

Name two observations that would seriously weaken your preferred conclusion. If you cannot name any, you are probably protecting a narrative, not testing one.

Alternative Frame Drill

Force yourself to produce at least one rival explanation and one non-obvious possibility. In intelligence work, this can be a mini alternative-analysis exercise; in daily life, it may simply prevent overreaction.

Language Hygiene Check

Replace words such as “obviously,” “always,” “never,” “proves,” and “everyone knows” with more precise terms. Inflated language often hides weak support.

Steelman Before Critique

State the strongest version of the opposing position before you reject it. This protects against straw man reasoning and sharpens your own analysis.

Confidence Note

Add one sentence: “My confidence is low/moderate/high because…” Then add: “What would change my mind is…” This keeps confidence tied to evidence.

Source Position Check

Ask: What does this source actually know? What might this source want? What is the source well placed to observe, and what is it not well placed to infer? This reduces both gullibility and cynical dismissal.

Post-Decision Review

After a decision or finished product, ask: What did I assume? What did I miss? What surprised me? Reflection converts experience into improved judgment.

Taken together, these micro-protocols translate established critical-thinking moves into habits that are usable under time pressure. They align with the broader aim of critical thinking as self-regulatory judgment and with intelligence tradecraft that emphasizes explicit assumptions, alternatives, disconfirming evidence, and calibrated uncertainty. (CIA, 2009; Facione, 1990; Heuer, 1999; ODNI, 2015).
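Two of the micro-protocols, the Language Hygiene Check and the Confidence Note, are mechanical enough to sketch in a few lines of Python. This is a rough illustration, not a validated tool: the term list comes from the protocol text, while the function names `flag_inflated_language` and `confidence_note` and the sample draft sentence are hypothetical.

```python
import re

# Terms named in the Language Hygiene Check above.
ABSOLUTE_TERMS = ["obviously", "always", "never", "proves", "everyone knows"]

_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(term) for term in ABSOLUTE_TERMS) + r")\b",
    re.IGNORECASE,
)


def flag_inflated_language(text: str) -> list[str]:
    """Return each flagged term, lowercased, in order of appearance."""
    return [match.group(0).lower() for match in _PATTERN.finditer(text)]


def confidence_note(level: str, reason: str, would_change: str) -> str:
    """Fill in the two-sentence Confidence Note template."""
    if level not in {"low", "moderate", "high"}:
        raise ValueError("level must be 'low', 'moderate', or 'high'")
    return (
        f"My confidence is {level} because {reason}. "
        f"What would change my mind is {would_change}."
    )


# Invented draft sentence for illustration.
draft = "Obviously the report proves intent; everyone knows this group never bluffs."
flags = flag_inflated_language(draft)
print(flags)
# prints: ['obviously', 'proves', 'everyone knows', 'never']

print(confidence_note(
    "moderate",
    "two independent sources agree but neither had direct access",
    "a source with direct access reporting otherwise",
))
```

A keyword match is only a prompt to reread the sentence. It cannot tell whether “never” is justified in context, so the judgment about what to replace it with stays with the writer.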

Conclusion

Fallacies are not marginal curiosities in logic. They are recurring threats to judgment wherever people must interpret evidence, defend claims, advise decision-makers, or make sense of complexity. For academics, fallacy study deepens argument evaluation and strengthens scholarly rigor. For practitioners, it reduces the chance that confidence, rhetoric, hierarchy, or urgency will outrun evidence. For intelligence professionals, it is inseparable from analytic integrity. The real task is not merely to spot errors in others, but to build habits that make one’s own reasoning more transparent, revisable, and defensible. Critical thinking matures when analysts and citizens alike learn to separate information from assumption, hold alternatives in view, express uncertainty honestly, and make reflection a routine rather than a luxury. In that sense, resisting fallacies is not just a logical exercise. It is part of the craft of serious judgment. (Facione, 1990; Hansen, 2024; Heuer, 1999; ODNI, 2015).

References

Central Intelligence Agency, Center for the Study of Intelligence. (2009). A tradecraft primer: Structured analytic techniques for improving intelligence analysis.

Facione, P. A. (1990). Critical thinking: A statement of expert consensus for purposes of educational assessment and instruction (The Delphi Report). California Academic Press.

Hansen, H. V. (2024). Fallacies. In E. N. Zalta & U. Nodelman (Eds.), The Stanford Encyclopedia of Philosophy (Fall 2024 ed.). Metaphysics Research Lab, Stanford University.

Heuer, R. J., Jr. (1999). Psychology of intelligence analysis. Center for the Study of Intelligence, Central Intelligence Agency.

Kruger, J., & Dunning, D. (1999). Unskilled and unaware of it: How difficulties in recognizing one’s own incompetence lead to inflated self-assessments. Journal of Personality and Social Psychology, 77(6), 1121–1134. DOI: 10.1037/0022-3514.77.6.1121

Office of the Director of National Intelligence. (2015). Intelligence Community Directive 203: Analytic standards.

Tindale, C. W. (2007). Fallacies and argument appraisal. Cambridge University Press. DOI: 10.1017/CBO9780511806544

Author Bio

Charles M. Russo, Ph.D., is a retired FBI Intelligence Analyst, U.S. Navy combat veteran, educator, and scholar-practitioner with more than three decades of experience across intelligence, public safety, criminal justice, and higher education. His work focuses on analytic tradecraft, crime and intelligence analysis, critical thinking, and the translation of theory into practical tools for professionals who must make high-stakes judgments under pressure. He writes for analysts, educators, and public-safety leaders seeking disciplined, ethical, and defensible reasoning in complex environments.

Disclaimer

This article is intended for educational and professional development purposes only. It does not represent the official views of any current or former agency, institution, or employer. Examples are illustrative and generalized. Readers should apply the concepts presented here in a manner consistent with applicable law, policy, ethics, and professional standards.